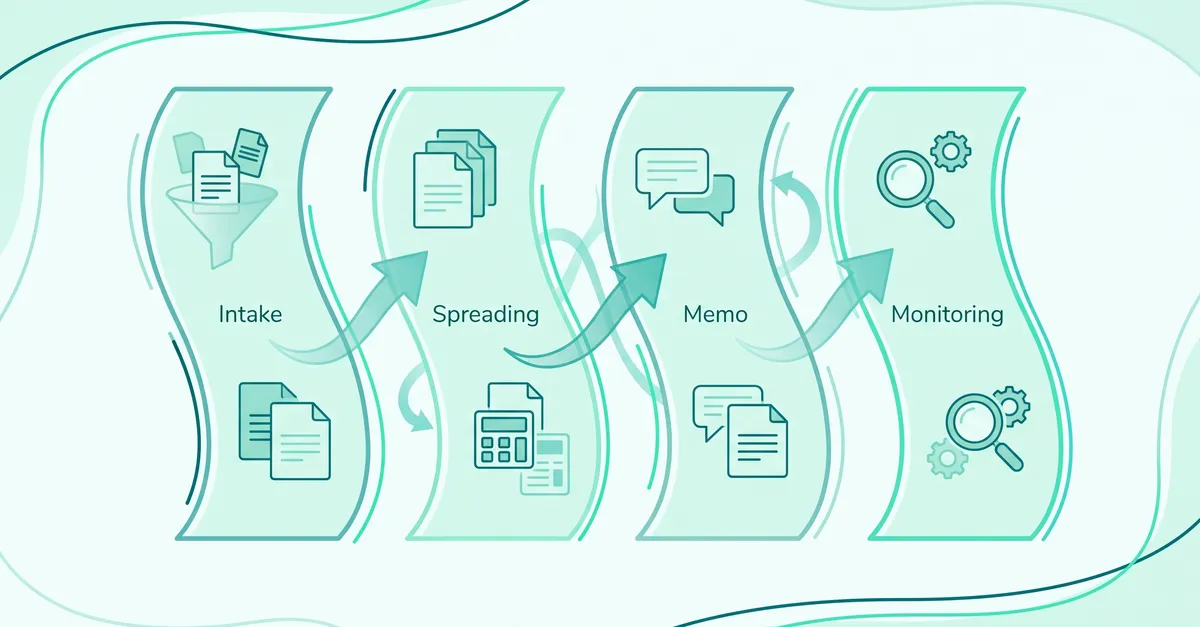

Community banks looking at AI for commercial lending should focus on where AI actually adds value: document collection and intake, spreading and tax return analysis, credit memo and analyst workflow, and portfolio or covenant monitoring. That is the map that matters. Chatbot demos and core-banking modernization projects are different categories, and usually a distraction when the real problem is slow commercial underwriting.

That distinction matters because the vendor landscape is noisy. Names like nCino, Abrigo, Ocrolus, SecureLend, and Aloan get compared as if they do the same job. They don't. The better question is not "which bank AI platform is best?" It is "where does my team lose time, and what class of tool fixes that bottleneck without creating a new governance problem?"

For most community banks, the answer is not borrower-facing AI theater. It is analyst throughput. A clean business return still takes meaningful time to spread, and a messy Form 1065 with layered ownership and K-1 attachments creates heavy manual work before anyone applies credit judgment. That is where AI earns its keep.

If you want the broader operating model, the AI-Assisted Underwriting Playbook and the guide to AI underwriting use cases already in production are the right companions. This page stays focused on where AI actually helps.

Where does AI add value in community bank lending?

| Category | Best fit | Tradeoffs | Examples |
|---|---|---|---|
| Document collection and intake | Banks with incomplete borrower packets, too much chasing, or inconsistent request lists. | Often strong on portal workflow and weak on downstream credit analysis. | nCino, Abrigo, SecureLend |
| Spreading and tax return analysis | Banks losing analyst hours to 1040s, 1065s, 1120-S returns, K-1 tracing, and reconciliation. | Generic document AI handles easy files but often breaks on multi-entity commercial work. | Ocrolus, Abrigo, nCino, Aloan |
| Credit memo and analyst workflow | Teams that already understand the credit and need cited writeups, exception handling, and standardized review output. | Memo-first tools are weak if the underlying spread and source trace are weak. | Aloan, nCino, Abrigo |
| Portfolio and covenant monitoring | Banks with growing annual review queues, covenant testing drift, or missed reporting deadlines. | Post-booking value is real, but it usually comes after intake and underwriting controls are in place. | Abrigo, nCino, Aloan |

That table looks simple, and it should be. The mistake is evaluating every vendor as if it were trying to do the same job. It is not. Some vendors are really origination systems with AI features attached. Some are document data layers. A few are trying to own the analyst workflow itself. If you skip that distinction, the demo process quickly turns into nonsense.

Which category should a community bank start with?

Start where the analysts are burning hours, not where the demo looks flashy. In most commercial shops that means spreading, tax return analysis, and the cross-document review that surrounds them. The playbook material behind this page makes the pattern obvious: clean returns still take real analyst time, multi-entity 1065 packages expand that effort fast, and complex borrower groups can consume most of a day before the memo even starts.

That is why borrower-facing AI theater is usually the wrong first purchase. A chatbot that answers borrower questions does not help if the packet is still incomplete. An AI memo writer does not help if analysts still spend a day rebuilding the financials underneath it. A fancy portal does not help if the underwriter still has to trace every K-1 manually after the upload is done.

Practical sequence

- Fix spreading and document analysis first.

- Then standardize memo and exception workflow on top of cited data.

- Then extend into covenant monitoring and annual review.

- Treat intake and portal tooling as a separate decision unless it is the clear bottleneck.

If your bank is specifically trying to solve the spreading problem, go deeper on financial spreading software and what loan spreading software actually is. That is the part most community banks underestimate.

What fits in document collection and intake?

This category is about getting a complete file into the bank with less back-and-forth. The job is request generation, checklist management, borrower upload, and routing. If your lenders are losing days to document chasing, this matters. If packets arrive complete and the delay starts after that, this is not your first purchase.

nCino and Abrigo are broad lending platforms whose strength in this category is workflow coverage: checklists, portals, routing, and the deal pipeline around them. SecureLend and similar intake-first tools tend to pitch front-end speed and borrower experience. All of them can help if incomplete packets are the real bottleneck.

What they do not provide is underwriting depth. A complete intake workflow does not produce a credible global cash flow, a cited memo, or a defensible exception history on its own. Buyers should be blunt about that distinction before treating intake tooling as an underwriting solution.

What fits in spreading and tax return analysis?

This is the category most community banks should care about first. It covers document classification, extraction, mapping into the spread, K-1 tracing, cross-document checks, and the analyst review path that turns raw files into usable credit analysis.

Ocrolus is the clearest example of a strong document-AI layer, good at extraction and document handling. But extraction alone is not a commercial underwriting workflow. On the other end, spreading modules from platform vendors can store a spread and help with workflow, yet the hardest commercial files still fall back to manual handling. That gap is what AI-native underwriting overlays like Aloan are built to close: a cited spread produced directly from the source documents, with K-1s, footnotes, and related-party entries traced automatically so the analyst reviews the work instead of rebuilding it.

The test here is not whether a tool can read a clean 1040. Almost anything can. The test is whether it can handle a borrower group with multiple entities, non-standard statements, related-party debt, and ugly K-1 logic without losing the source trail. If the tool gets shaky the moment the packet stops looking like a training demo, you do not have dependable underwriting automation.
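
To make "layered ownership" concrete, here is a minimal Python sketch of one small piece of K-1 tracing: computing a guarantor's look-through share of an operating entity held through more than one path, while keeping the K-1 citation behind every hop. The entity names, percentages, income figure, and the `OwnershipLink` schema are all hypothetical. The sketch assumes income is allocated in proportion to ownership and that the ownership graph has no cycles; real partnership K-1s do not always allocate that way, which is one more reason the source document has to stay attached.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OwnershipLink:
    """One hop in a borrower group: `owner` holds `pct` of `entity` (hypothetical schema)."""
    owner: str
    entity: str
    pct: float    # ownership as a fraction, e.g. 0.5 for 50%
    source: str   # citation for the K-1 that documents this hop


def ownership_paths(links, owner, target, share=1.0, trail=()):
    """Return (effective_share, citations) for every ownership path from owner to target.

    Multiplies percentages along each path. Assumes the ownership graph is acyclic.
    """
    paths = []
    for link in links:
        if link.owner != owner:
            continue
        path_share = share * link.pct
        path_trail = trail + (link.source,)
        if link.entity == target:
            paths.append((path_share, path_trail))
        else:
            paths.extend(ownership_paths(links, link.entity, target, path_share, path_trail))
    return paths


# Hypothetical borrower group: the guarantor owns OpCo directly and through Holdco.
links = [
    OwnershipLink("Guarantor", "Holdco LLC", 0.60, "Holdco K-1 to Guarantor, p. 1"),
    OwnershipLink("Holdco LLC", "OpCo LP", 0.50, "OpCo K-1 to Holdco, p. 1"),
    OwnershipLink("Guarantor", "OpCo LP", 0.20, "OpCo K-1 to Guarantor, p. 1"),
]

paths = ownership_paths(links, "Guarantor", "OpCo LP")
total_share = sum(share for share, _ in paths)
opco_ordinary_income = 200_000.0  # hypothetical; would itself be cited to the OpCo return
guarantor_share_of_income = total_share * opco_ordinary_income

for share, citations in paths:
    print(f"{share:.0%} via {' -> '.join(citations)}")
print(f"Look-through share: {total_share:.0%}, income attributed: {guarantor_share_of_income:,.0f}")
```

Keeping each path separate means every slice of the guarantor's interest carries its own citation chain, so an analyst can see which K-1 supports which portion of the attributed income.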

What good looks like: every extracted number ties back to a source page, every override is preserved, and the analyst reviews the AI's work instead of rebuilding it. That standard matters just as much for examiners as it does for speed.
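
That standard can be expressed as a data structure. Below is a minimal Python sketch of a spread line that keeps the extracted value, its source page, and an append-only override history, so the number the analyst relies on never loses its trail. The names `SpreadLine`, `Citation`, and `Override` are hypothetical, not any vendor's schema, and the figures are made up for illustration.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class Citation:
    document: str
    page: int


@dataclass(frozen=True)
class Override:
    analyst: str
    old_value: float
    new_value: float
    reason: str
    at: datetime


@dataclass
class SpreadLine:
    """One spread line item: the extracted figure stays, overrides are appended, never overwritten."""
    label: str
    extracted_value: float
    source: Citation
    overrides: list[Override] = field(default_factory=list)

    @property
    def value(self) -> float:
        """The value in use: the latest override if any, else the extracted figure."""
        return self.overrides[-1].new_value if self.overrides else self.extracted_value

    def override(self, analyst: str, new_value: float, reason: str) -> None:
        """Record an analyst change with who, what, why, and when."""
        if not reason.strip():
            raise ValueError("an override needs a stated reason")
        self.overrides.append(
            Override(analyst, self.value, new_value, reason, datetime.now(timezone.utc))
        )


# Hypothetical example: an analyst adjusts an extracted figure after reviewing the return.
line = SpreadLine("Depreciation", 48_000.0, Citation("Form 1065 (borrower file)", 1))
line.override("analyst_a", 52_000.0, "Corrected after review of attached depreciation statement")

print(line.value)             # the figure the spread now uses
print(line.extracted_value)   # the original extraction is still preserved
print(line.source, len(line.overrides))
```

Requiring a reason on every override is a deliberate choice here: it is what turns an edit into reviewable evidence rather than a silent change.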

If this is your bottleneck, the closest companion reads are how to automate global cash flow analysis and the buyer's page on AI underwriting platforms for community banks.

What fits in credit memo and analyst workflow?

This bucket matters once the data layer is trustworthy. The real job is not "write a memo with AI." It is to standardize exception handling, preserve analyst judgment, and turn cited financial analysis into a memo framework the underwriter can actually use.

Aloan sits squarely in this layer because it is designed around the underwriter's working surface — cited analysis, exception handling, and review — not just the extraction step. nCino and Abrigo have also pushed AI assistant language into the memo workflow. Those can help, but banks should be skeptical of any memo demo that cannot show the source numbers underneath it. Memo generation is downstream. If the spread is weak, the memo will just be wrong faster.

This is where a lot of AI theater shows up, because the output looks impressive in a boardroom. Thirty seconds to a polished narrative feels magical. It is also the easiest thing to fake. A senior credit officer should ask a ruder question: show me the path from the memo back to the 1065, the K-1, the footnote, and the analyst override history. If that chain is weak, do not buy the memo layer yet.

For a narrower look at this layer, the relevant solution page is AI credit memo generation. Just keep the sequence straight. Memo tooling should sit on top of solid analysis, not replace it.

What fits in portfolio and covenant monitoring?

Once the loan is booked, the same manual pattern shows up again: financials arrive late, covenant math lives in spreadsheets, annual reviews drift, and deterioration gets spotted later than it should. This is a separate buying motion from origination, but it belongs in the same map because the best vendors are starting to connect the two.

Abrigo has obvious credibility here because portfolio and risk infrastructure have long been part of the product story. nCino has pushed into the same territory. Aloan extends the same cited document logic used in underwriting into covenant testing and annual review, so banks keep one evidence trail from origination through monitoring instead of running two systems with different logic.

Still, this is rarely the first place to start unless post-booking workload is already the loudest pain. If your analysts are drowning in new-loan spreads, fix that before you automate quarter-end surveillance.
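
For a sense of what moving covenant math out of spreadsheets looks like, here is a minimal Python sketch of a minimum-ratio covenant test that carries its evidence with it. It assumes a debt service coverage ratio (DSCR) covenant with a hypothetical 1.20x minimum and hypothetical cited inputs; actual covenant definitions come from the loan agreement and vary by deal.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CitedAmount:
    amount: float
    source: str  # where the figure came from, e.g. a spread line or schedule page


@dataclass(frozen=True)
class CovenantResult:
    name: str
    ratio: float
    minimum: float
    passed: bool
    evidence: tuple[str, ...]


def check_min_ratio_covenant(name: str, numerator: CitedAmount,
                             denominator: CitedAmount, minimum: float) -> CovenantResult:
    """Compute numerator / denominator and compare it to a minimum, keeping the input citations."""
    if denominator.amount <= 0:
        raise ValueError(f"{name}: denominator must be positive")
    ratio = numerator.amount / denominator.amount
    return CovenantResult(
        name=name,
        ratio=ratio,
        minimum=minimum,
        passed=ratio >= minimum,
        evidence=(numerator.source, denominator.source),
    )


# Hypothetical annual review inputs for a DSCR covenant with a 1.20x minimum.
result = check_min_ratio_covenant(
    "Minimum DSCR",
    numerator=CitedAmount(375_000.0, "Annual review spread: cash flow available for debt service"),
    denominator=CitedAmount(300_000.0, "Loan amortization schedule: annual principal and interest"),
    minimum=1.20,
)
status = "PASS" if result.passed else "FAIL"
print(f"{result.name}: {result.ratio:.2f}x vs {result.minimum:.2f}x minimum -> {status}")
for source in result.evidence:
    print("  source:", source)
```

A production version would also need a rule for rounding at the threshold, which is a loan-agreement question rather than a coding one.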

How should community banks evaluate vendors in this category?

Three questions sort the market quickly.

- Is this an overlay or a replacement? nCino and Abrigo pull you toward broader platform decisions. Aloan is positioned as an underwriting overlay you add without replacing the LOS or core. That difference drives timeline, cost, and change-management risk more than almost any feature comparison.

- Can it handle real commercial documents? Ask for 1040s, 1065s, K-1s, non-standard statements, and a borrower group with layered ownership. If the vendor pivots to a clean demo file, you learned something.

- Can an examiner follow the evidence trail? Source-page citations, override logs, exception history, and human sign-off are not nice extras. They are the difference between useful automation and a future cleanup project.

A fourth question is more strategic: what problem are you actually solving this year? If the honest answer is turnaround time, spreading automation and analyst workflow deserve priority. If the honest answer is borrower friction before the application is even complete, then intake tooling may come first. Just do not let a shiny AI story obscure the actual bottleneck.

The governance side matters too. The right standard is laid out in the examiner readiness guide: human decision authority, explainability, and audit trail. Those are not separate from product evaluation. They are product evaluation.

The short answer

If you ask "what are the best AI tools for community bank lending," the honest answer is that there is no single list without context. There are four different tool categories, and they solve different problems.

nCino and Abrigo matter when the buying decision is broader workflow and origination infrastructure. SecureLend and similar intake-first tools fit when borrower-side friction is the loudest pain. Ocrolus is the document-intelligence layer. Aloan is built for the bottleneck most community banks actually have — commercial underwriting itself, especially spreading, cited memo prep, and the analyst workflow between them.

Start with the analyst bottleneck. That is where the hours are, where the ROI is cleanest, and where the institution learns fastest. Everything else can come after the file moves faster and the work still holds up under review.