Every time we talk to a bank about AI-powered underwriting, the first real question is some version of: "What happens to our loan data?"

Fair question. If you're a community bank or credit union evaluating AI tools for your lending operation, you should be asking it. The problem is that most vendors answer with marketing language ("bank-grade security," "enterprise-ready") without telling you what's actually happening at the infrastructure level.

So let's get specific.

The "Training" Question

The single biggest concern we hear: "Will our borrower financial data be used to train your AI?"

No. Both of our AI providers — Google and AWS — contractually guarantee it.

Aloan uses Google Cloud Vertex AI and AWS Bedrock for AI processing. Both are enterprise API services used widely across the financial services industry. Both providers offer contractual guarantees that customer inputs and outputs are never used to train, improve, or fine-tune foundation models.

This isn't a pinky promise. It's in the service agreements. Google documents their data governance commitments. AWS documents theirs. Your data goes in, gets processed, results come back, and nothing is retained.

There's a meaningful difference between using AI through a browser chat window and using enterprise API services with data governance agreements. The enterprise API path — which is what Aloan uses exclusively — comes with contractual commitments around data handling, retention, and training exclusion that consumer-facing products don't guarantee by default.

| Factor | Consumer AI (ChatGPT) | Aloan on enterprise AI APIs (Vertex AI, Bedrock) |
|---|---|---|
| Data retention | May be stored and reviewed | Deleted after processing |
| Training on your data | Possible unless opted out | Contractually prohibited |
| Encryption | TLS in transit | TLS 1.2+ in transit, AES-256 at rest |
| Audit trail | None | Full, with every output traced to source |
| Examiner documentation | Not available | Built-in compliance reporting |
| Data isolation | Shared infrastructure | Isolated processing environments |

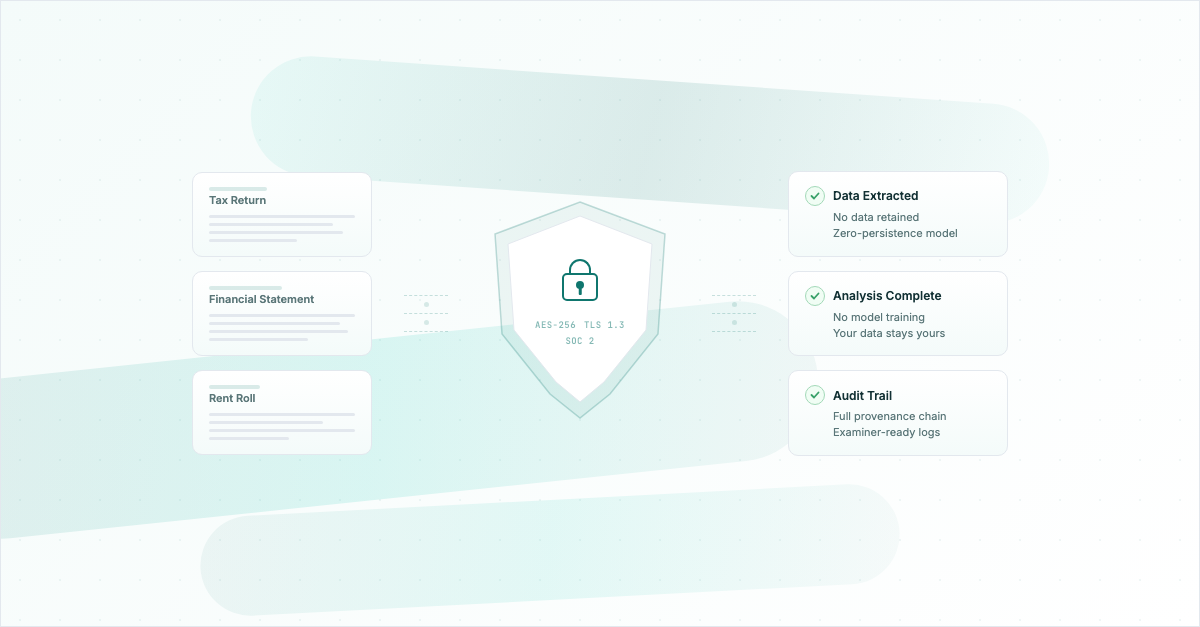

What Happens When a Loan Doc Hits Our Platform

Here's the actual data flow when your analyst uploads a set of tax returns, bank statements, and financials into Aloan:

- Upload and encryption. Documents hit our platform over TLS-encrypted connections and are stored encrypted at rest on US-hosted infrastructure.

- AI processing via encrypted API calls. When we need AI to extract data, classify documents, or generate analysis, we make encrypted API calls to Vertex AI or Bedrock. The model processes the request and returns results.

- Zero retention by AI providers. After processing, neither Google nor AWS retains your data. No copies, no caches, no training queues. The model provider has no access to your data after the API call completes.

- Results delivered to the user. The extracted data, spreads, risk flags, and credit analysis are assembled in the platform and presented to your lending team with full source-document traceability.

No third party keeps or has standing access to your loan documents. The AI providers process each request, but they don't store it, they don't see your account, and they don't know who you are. Each call is stateless: in, process, out, gone.
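For readers who want to see what "TLS 1.2+ in transit" means at the code level, here is a minimal sketch using Python's standard `ssl` module. It illustrates the policy under stated assumptions and is not Aloan's client code: the helper name `make_api_tls_context` is hypothetical, and in practice the Google and AWS SDKs manage their own connections. The point is the policy itself: verify the server's certificate and hostname, and refuse anything older than TLS 1.2.

```python
import ssl


def make_api_tls_context() -> ssl.SSLContext:
    """Client-side TLS policy for outbound AI API calls (hypothetical helper)."""
    # create_default_context() turns on certificate verification and
    # hostname checking against the system's trusted CA store.
    ctx = ssl.create_default_context(ssl.Purpose.SERVER_AUTH)
    # Refuse to negotiate TLS 1.0 or 1.1; only TLS 1.2 and newer are allowed.
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    return ctx


ctx = make_api_tls_context()
print(ctx.minimum_version == ssl.TLSVersion.TLSv1_2)  # True
print(ctx.verify_mode == ssl.CERT_REQUIRED)           # True
print(ctx.check_hostname)                             # True
```

A context like this can be passed to `http.client.HTTPSConnection(host, context=ctx)` or `urllib.request.urlopen(url, context=ctx)`, so every outbound request inherits the same minimum protocol version.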

The Examiner Conversation

If you're a regulated institution, the question isn't just "Is this secure?" It's also "Can I defend this to my examiner?"

We built for that. Aloan's architecture aligns with three frameworks that examiners care about:

- FFIEC guidance on technology risk management: controls for third-party technology services, information security, and business continuity planning.

- OCC 2023-17 (Third-Party Risk Management): the updated interagency guidance on managing risks from third-party relationships, including fintechs. Our documentation is structured to fit directly into your vendor management process.

- SR 11-7 (Model Risk Management): the Federal Reserve's supervisory guidance on model risk, issued jointly with the OCC as Bulletin 2011-12. This is the big one for AI. Every output Aloan generates traces back to source documents. Your examiner can pull any number in a credit memo and see exactly which page of which tax return it came from.

The traceability piece is worth emphasizing. When an examiner samples a loan file that used AI-assisted underwriting, they need to be able to verify the work. If the AI says DSCR is 1.35x, the examiner needs to see the net operating income and debt service figures that produced it, and where those figures came from in the borrower's financials. Aloan provides that chain from output to source document, not just for the examiner but because your credit analysts need it too.
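The DSCR example can be made concrete. DSCR is net operating income divided by annual debt service, and the minimal sketch below keeps a source reference attached to every input so the final ratio can be walked back to specific pages. The `SourceRef` and `TracedValue` types, the document names, and the dollar amounts are all hypothetical, chosen only so the result lands on the 1.35x used above; this is an illustration of the idea, not Aloan's data model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SourceRef:
    """Hypothetical pointer to where a figure was extracted."""
    document: str
    page: int


@dataclass(frozen=True)
class TracedValue:
    """A number together with every source reference that produced it."""
    value: float
    sources: tuple[SourceRef, ...]


def net_operating_income(revenue: TracedValue, expenses: TracedValue) -> TracedValue:
    # NOI = revenue - operating expenses; keep both inputs' sources.
    return TracedValue(revenue.value - expenses.value,
                       revenue.sources + expenses.sources)


def dscr(noi: TracedValue, debt_service: TracedValue) -> TracedValue:
    # DSCR = NOI / annual debt service; provenance is carried forward.
    if debt_service.value <= 0:
        raise ValueError("annual debt service must be positive")
    return TracedValue(noi.value / debt_service.value,
                       noi.sources + debt_service.sources)


# Illustrative inputs only (hypothetical documents and amounts).
revenue = TracedValue(500_000.0, (SourceRef("2023 business tax return", 1),))
expenses = TracedValue(365_000.0, (SourceRef("2023 business tax return", 2),))
debt = TracedValue(100_000.0, (SourceRef("Proposed loan terms", 1),))

ratio = dscr(net_operating_income(revenue, expenses), debt)
print(f"DSCR {ratio.value:.2f}x")  # DSCR 1.35x
for ref in ratio.sources:
    print(f"  from {ref.document}, page {ref.page}")
```

Frozen dataclasses and tuples keep the provenance immutable once recorded, which is how an audit trail should behave: a figure can be recomputed, but its history can't be quietly edited.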

Platform and Infrastructure Certifications

Aloan is SOC 2 Type II certified. An independent auditor evaluated our security, availability, and confidentiality controls over an observation period — not just a snapshot — and the resulting report is available to customers and prospects under NDA through our Trust Center. If you're building a vendor management file, this is usually the first thing your risk team asks for.

Our AI inference providers — Google Cloud (Vertex AI) and AWS (Bedrock) — maintain:

- SOC 2 Type II - independent audit of security controls, availability, and confidentiality

- ISO 27001 - international standard for information security management

- FedRAMP - federal cloud security authorization

- HIPAA eligibility

- GDPR compliance

We can provide our SOC 2 Type II report, documentation of our security controls, our providers' certifications, penetration test results, and data flow documentation as part of your vendor due diligence. Most of it is waiting for you in the Trust Center — this isn't something you have to pry out of us.

What We Don't Do

Sometimes the clearest way to explain a security posture is to list the things you won't find:

- We don't use your data to train models. Ever.

- We don't sell, share, or monetize customer data.

- We don't use consumer AI services (no ChatGPT, no browser-based tools).

- We don't retain data longer than needed to provide the service.

- We don't give AI providers access to your account, your data, or your identity.

The Real Risk Calculation

Here's what we think the honest conversation sounds like: Yes, using AI in underwriting introduces a new vendor and a new technology into your process. That's a real consideration that deserves real due diligence.

But the alternative isn't risk-free either. Manual spreading has error rates. Analysts miss things when they're processing their twentieth deal of the month. Document collection takes weeks instead of hours. Covenant monitoring falls behind because nobody has time to pull the reports.

The question isn't "Is AI perfectly safe?" It's "Does this vendor's security posture meet my standards, and does the operational benefit justify the relationship?" For regulated institutions that need examiner-ready audit trails, enterprise-grade infrastructure, and contractual data protection guarantees, those are the questions we've built Aloan to answer.

FAQ: AI data security in commercial lending

Is loan data used to train AI models?

Not when the platform runs on enterprise AI APIs with contractual training exclusions. Aloan processes loan documents through Google Cloud Vertex AI and AWS Bedrock, both of which contractually guarantee that customer data is never used to train AI models. Data is encrypted in transit (TLS 1.2+) and at rest (AES-256), processed in isolated environments, and deleted after processing.

What certifications should AI lending platforms have?

At minimum: SOC 2 Type II for the platform itself, with the infrastructure provider holding ISO 27001, FedRAMP authorization, and HIPAA eligibility. Aloan holds SOC 2 Type II certification, and its AI inference providers (Google Vertex AI, AWS Bedrock) hold SOC 2 Type II, ISO 27001, and FedRAMP. Look for documented data processing agreements, penetration testing results, and incident response procedures. The platform should also align with FFIEC guidance and OCC supervisory expectations for third-party risk management, so it fits into your institution's own compliance program.

How do bank examiners evaluate AI in commercial lending?

Examiners evaluate AI against existing frameworks: SR 11-7 (model risk management), OCC 2023-17 (third-party risk management), and FFIEC IT examination procedures. They look for model validation documentation, data governance policies, audit trails showing how AI outputs inform credit decisions, and evidence that the institution understands and can explain the AI's methodology.

What questions should banks ask AI vendors about data security?

Key questions: Where is data processed and stored? Is customer data ever used for model training? What encryption standards are used in transit and at rest? What audit trail exists for AI outputs? What certifications does the platform hold? How is data retention handled? Can you provide a data processing agreement? What happens during a security incident?
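A risk team can turn these questions into a simple tracker. The Python sketch below records which document a vendor supplied as evidence for each question and lists the ones still open. The `VendorReview` class and the example entries are hypothetical, not an Aloan or regulatory template; only the question wording comes from this FAQ.

```python
from dataclasses import dataclass, field

# Question wording taken from the FAQ answer; the tracker itself is hypothetical.
QUESTIONS = (
    "Where is data processed and stored?",
    "Is customer data ever used for model training?",
    "What encryption standards are used in transit and at rest?",
    "What audit trail exists for AI outputs?",
    "What certifications does the platform hold?",
    "How is data retention handled?",
    "Can you provide a data processing agreement?",
    "What happens during a security incident?",
)


@dataclass
class VendorReview:
    vendor: str
    # Maps each answered question to the document supplied as evidence.
    evidence: dict[str, str] = field(default_factory=dict)

    def record(self, question: str, document: str) -> None:
        # Reject questions that aren't on the checklist to catch typos early.
        if question not in QUESTIONS:
            raise KeyError(f"not on the checklist: {question!r}")
        self.evidence[question] = document

    def open_items(self) -> list[str]:
        """Questions with no supporting document yet, in checklist order."""
        return [q for q in QUESTIONS if q not in self.evidence]


review = VendorReview("Example AI vendor")
review.record("Is customer data ever used for model training?", "Data processing agreement")
review.record("What certifications does the platform hold?", "SOC 2 Type II report")
for item in review.open_items():
    print("OPEN:", item)
```

Tying each answer to a named document, rather than a yes/no checkbox, mirrors what examiners expect to find in a vendor management file.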

Want the Details?

Review our full AI Data Security page, or schedule a call to walk through our security architecture with your team. We can provide documentation of our security controls, provider certifications, and vendor due diligence packages on request.